Stable Diffusion is still usable. But it's not as hot as it used to be.

I first got into SD back in 2023, and at that time, installing it locally, downloading models, and training LoRA felt like something special. There was a certain cool factor in saying "I run AI images myself." But looking back now... honestly, it's a hassle. The installation is complicated, you get errors from not having enough VRAM, and I've even lost sleep from the computer fan noise while training.

So these days I sometimes wonder, "Is it really worth it?" Of course, SD has clear advantages of its own, but it's not for everyone.

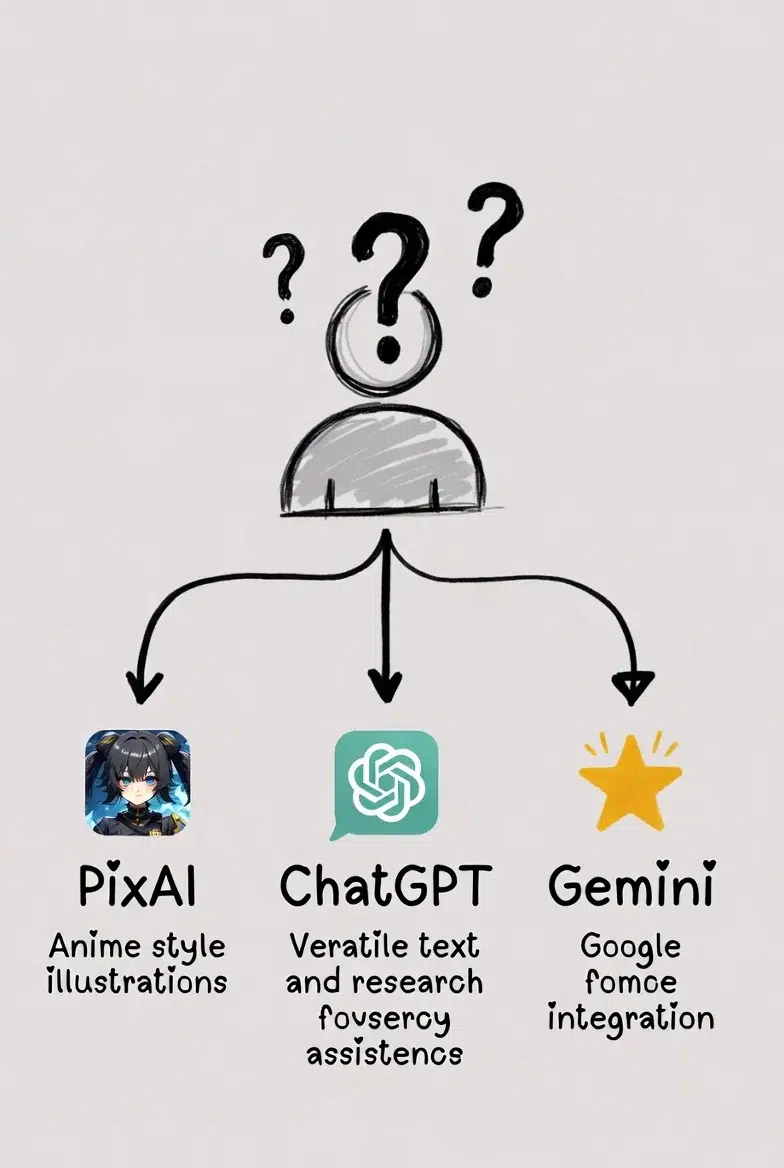

I looked at some alternative platforms and put together a summary based on my experience.

Why Look for Alternatives

SD's core advantage is open weights. You can fine-tune with your own data to create the style you want. That's genuinely powerful.

But to use it properly:

First, you need to understand what model training is. I was stuck from the start with questions like "What's an epoch?" and "What should I set the batch size to?" I tried following along with YouTube videos, but some things didn't work because the versions were different. It took weeks of trial and error before I got something decent.
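For anyone stuck on the same two questions, here's a rough sketch of how the numbers fit together. An epoch is one full pass over the training images, and the batch size is how many images are processed per step. The function name and the `repeats` value are my own assumptions for illustration (some trainers show each image several times per epoch), and actual tools may count steps a little differently.

```python
import math


def total_training_steps(num_images: int, repeats: int, epochs: int, batch_size: int) -> int:
    """Estimate optimizer steps for a simple training run.

    Assumptions (hypothetical, not tied to any specific trainer):
    - each image is shown `repeats` times per epoch
    - a final partial batch still counts as one step
    """
    samples_per_epoch = num_images * repeats
    steps_per_epoch = math.ceil(samples_per_epoch / batch_size)
    return steps_per_epoch * epochs


# 20 images, 10 repeats, 10 epochs, batch size 2
print(total_training_steps(num_images=20, repeats=10, epochs=10, batch_size=2))  # prints 1000
```

Doubling the batch size roughly halves the step count, which is why VRAM (which limits the batch size) ends up mattering so much for training time.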

The setup is surprisingly tricky too. Python versions, CUDA versions, package conflicts... Once things get tangled up, sometimes you have to wipe everything and reinstall. I once wasted 2 hours trying to install xformers.
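Before wiping everything, it can help to at least see what is actually installed. Below is a minimal sketch of that kind of check. The function name `describe_environment` is made up for this post, and the `torch` part only runs if PyTorch is already present; it is a starting point under those assumptions, not a full diagnostic.

```python
import importlib.util
import platform


def describe_environment(packages=("torch", "xformers")):
    """Report the Python version and whether each package can be found."""
    report = {"python": platform.python_version()}
    for name in packages:
        found = importlib.util.find_spec(name) is not None
        report[name] = "installed" if found else "missing"

    # Only try the CUDA check when PyTorch is importable.
    if report.get("torch") == "installed":
        try:
            import torch

            report["torch_version"] = torch.__version__
            # CUDA version PyTorch was built against (None on CPU-only builds)
            report["cuda_build"] = str(torch.version.cuda)
            report["cuda_available"] = str(torch.cuda.is_available())
        except Exception as exc:  # a broken install can fail on import
            report["torch_error"] = repr(exc)
    return report


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print(f"{key}: {value}")
```

If `cuda_build` and the driver's CUDA version don't line up, that mismatch is often where the tangle starts.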

Training time is also an issue. Training a LoRA with 20 images took about 40 minutes on my 3060 Ti. But when I didn't like the results, I'd change the parameters and run it again, then change them again and run again... The whole day would just disappear like that.

So for those thinking "Is there no other way?", here are three alternatives.

PixAI: Easy to Use and Built for Image Generation!

When you visit the PixAI.art site, the first impression is unmistakable. It's completely anime-specialized. The main page is full of illustrations, and it has a strong community vibe.

What surprised me most about this platform was the LoRA training. After all that struggling I did locally, here you just upload images, give the LoRA a name, wait, and it's done. I was like, "Wait, this actually works?" Honestly, I felt a bit deflated the first time I tried it. What had I been doing all this time?

Of course, you can't make detailed parameter adjustments. You can't change the learning rate the way you can in local training. But in most cases, the limited control you do get is enough. At least for someone like me who just wants "something roughly in my character's style."

There are tons of community models too. You can use models that others have trained, so even without training your own, you can find the style you want and start generating right away. These days, I sometimes visit just for the fun of browsing models.

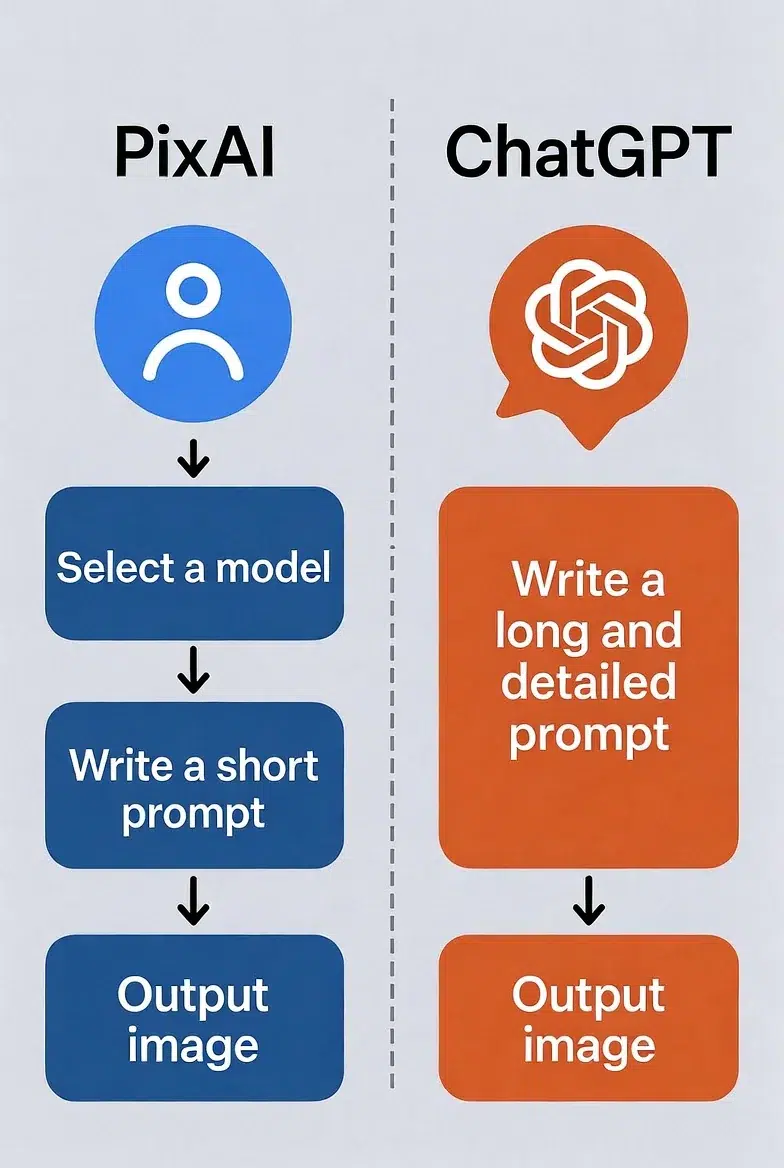

Prompts can be short too. For example:

masterpiece, kung fu, green qipao, black bob hair, dynamic pose

Even with just that much, the style is already built into the model, so the result comes out looking good without any extra effort. If you tried to get the same thing from ChatGPT, you'd need at least three lines just for the style description.

As for downsides... it's a bit weak outside of anime/illustration. Photorealistic images or landscape paintings are better done elsewhere. Also, free credits are limited, so you need to pay if you want to use it a lot. I got a monthly subscription, and considering how much I use it, it's not a bad deal.

Oh, writing about this makes me want to try LoRA training again. Haven't done it in a while.

ChatGPT: Use It Occasionally, But Prompts Get Long

ChatGPT needs no introduction. The image generation feature is based on DALL-E 3, and the quality is better than you'd expect.

But there's a reason I don't use it often. Specifying styles is too cumbersome.

With PixAI, you select a model and the style is automatically applied. ChatGPT doesn't have that. If you want an anime style, you have to describe it yourself.

Here's the prompt I once used trying to create a character in a cheongsam:

A confident anime girl wearing an elegant red and gold cheongsam
with intricate dragon embroidery, high slit design.
Long black hair with crimson highlights.
Sharp eyes and a slight smirk.
Background: lantern-lit street at night with bokeh lights.
Cinematic lighting, dramatic shadows.
Ultra-detailed anime style, sharp line art, vibrant colors,
smooth cel shading, 4k resolution.

Long, right? If you don't write all that, you just get a generic style. Having to write this every time... honestly, it's a pain.
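One way to take some of the pain out of it is to keep the style part in a small template and only write the subject each time. Here's a minimal sketch of that idea. The names `ANIME_STYLE` and `build_prompt` are hypothetical helpers I made up for illustration; this just assembles text to paste into the chat, it doesn't call any API.

```python
# Reusable style description, taken from the end of the prompt above.
ANIME_STYLE = (
    "Ultra-detailed anime style, sharp line art, vibrant colors, "
    "smooth cel shading, 4k resolution."
)


def build_prompt(subject: str, background: str = "", lighting: str = "",
                 style: str = ANIME_STYLE) -> str:
    """Join the non-empty parts of a prompt into one string."""
    parts = [subject, background, lighting, style]
    return " ".join(p.strip() for p in parts if p.strip())


prompt = build_prompt(
    subject="A confident anime girl wearing an elegant red and gold cheongsam.",
    background="Background: lantern-lit street at night with bokeh lights.",
    lighting="Cinematic lighting, dramatic shadows.",
)
print(prompt)
```

It doesn't make the prompt any shorter, but at least the style block only has to be written once.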

Still, there are times when it's useful. Before creating an image, you can ask things like "What's this pose called?" You can look up the name of a specific martial arts move and put it in the prompt for more accurate results. This kind of integrated research + generation is convenient.

And for photorealistic or other non-illustration styles, such as oil painting or poster design, ChatGPT can sometimes be the better choice.

Gemini: Honestly, Not Great

Gemini can generate images too. It's based on Google's Imagen model.

But honestly... the quality is a bit lacking. Even with the same prompt, PixAI and ChatGPT produce more refined results. Style consistency is weak too.

From my experience, it's a bit awkward to use solely for image generation.

The only time it's really useful is when you're writing in Google Docs and think "Oh, I should add an image here." You can create one without switching tabs, which is convenient. It's for when speed matters more than perfect quality.

They say the video generation feature (Veo) integration is also an advantage, but I haven't tried that yet.

For me, it's just a "use it occasionally if it's there, no big deal if it's not" kind of thing.

So What Do I Use These Days

I use all three, but honestly, I open PixAI the most. I handle about 80% of my work there. Short prompts are enough, choosing models is fun, and the results are generally satisfying.

ChatGPT is for when I need research or want to try non-anime styles. Maybe a few times a month?

Gemini... really rarely. Only when I'm in a rush in Google Docs. In the past month, did I use it once? Twice?

I only fire up local SD when I need complex stuff like ControlNet or IP-Adapter. These days, I barely turn it on. Electricity costs add up too.

Personally, I think PixAI offers the best value for money. Especially if you mainly work with anime/illustration styles. It's much better than struggling to set up local SD.

Of course, for those who need ControlNet workflows or full customization, SD is still the answer. There's no replacing that.

Fact-Checking the Original

The original text mentions model names like "Nano Banana Pro," but I couldn't verify if this is an actual model. Nothing comes up in searches. Gemini's image generation is known to be based on the Imagen family, and ChatGPT uses DALL-E 3. Whether the original author was confused, used some internal code name, or it was a hallucination from AI-generated writing, I'm not sure.

"GPT-Image-1.5" also doesn't seem to be a real model name. I just ignored these parts and organized everything based on actual information.