UnHype: CLIP-Guided Hypernetworks for Dynamic LoRA Unlearning Paper Review

UnHype: CLIP-Guided Hypernetworks for Dynamic LoRA Unlearning

Recent image generation AI models like Flux and Stable Diffusion demonstrate remarkable performance. However, where there is light, there are shadows. The ability to generate violent or explicit images, or to reproduce copyrighted characters with striking accuracy, has been consistently raised as a concern.

Of course, developers are not standing idly by. They apply Machine Unlearning techniques to teach the AI "don't draw such images." But there's a critical dilemma here: when you modify the model to erase harmful content, you often damage its ability to create perfectly normal images as well.

The paper I'm introducing today, 'UnHype', addresses this dilemma in a very clever way. Instead of directly performing surgery on the model's brain, it uses a 'dynamic filter' that operates differently depending on the situation. Personally, I think this is the most practical approach among recent generative AI control papers I've read, which is why I'm sharing it.

Limitations of Existing Methods: Burning Down the House to Catch a Bug

Making AI forget specific concepts (e.g., 'violence' or a certain celebrity's face) is harder than it sounds. AI knowledge is intricately connected like a spider's web.

Previously, the main approach was to directly modify the model's weights. However, this method frequently causes a side effect called 'Catastrophic Forgetting'. For example, when training the AI to erase the concept of 'nude,' it might start blurring all images containing skin tones or become unable to properly draw human arms and legs.

Additionally, retraining the entire model every time a new target needs to be erased is inefficient in terms of time and cost. If you need to protect thousands of celebrity faces, you might need thousands of tuning sessions.

UnHype's Core Idea: Keep the Model Intact, Just Swap the Filter

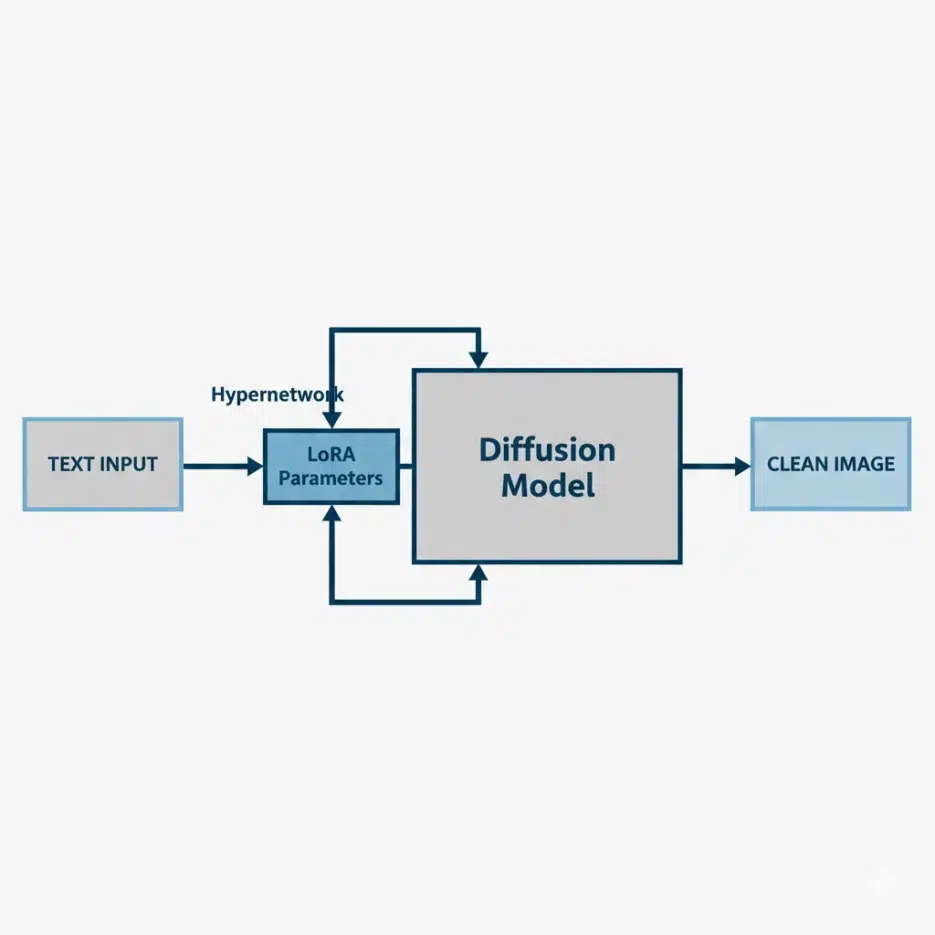

The researchers judged that modifying the model's weights directly is risky. So they introduced Dynamic LoRA driven by a hypernetwork: a small network whose output is the weights of another network, in this case a LoRA adapter.

Though it sounds like a complex term, the principle is intuitive enough for a middle schooler to understand.

1. Enter the Manager (Hypernetwork)

In the UnHype system, there's a smart manager standing beside the artist (Diffusion Model) who draws pictures. When a user enters a prompt, the manager reviews the content before the artist starts drawing.

2. Creating Situation-Specific Glasses (LoRA)

The manager analyzes the input sentence and creates appropriate 'glasses (LoRA adapters)' on the spot to put on the artist.

- Normal requests ("draw a cat"): The manager puts on transparent glasses with no distortion. The artist draws with their original ability.

- Prohibited requests ("draw celebrity XXX"): The manager creates and puts on special glasses that make only that celebrity's face appear blurry or different.

The key is that these glasses are not pre-made but generated in real time (Dynamic) from the input sentence. This allows a single manager model to cover many prohibited concepts at once, rather than needing one adapter per concept.
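In equation form, the manager-and-glasses picture can be written roughly as follows. The notation here is my own shorthand for illustration, not taken from the paper: $W$ is a frozen weight matrix inside the diffusion model, $E$ is the CLIP text encoder, $H_\phi$ is the hypernetwork, $c$ is the prompt, and $r$ is the LoRA rank.

$$
\bigl(A(c),\, B(c)\bigr) = H_\phi\bigl(E(c)\bigr), \qquad
W'(c) = W + B(c)\,A(c), \qquad
B(c) \in \mathbb{R}^{d_{\text{out}} \times r},\; A(c) \in \mathbb{R}^{r \times d_{\text{in}}}
$$

For a harmless prompt, the ideal output is $B(c)A(c) \approx 0$, so $W'(c) \approx W$ (the transparent glasses). For a prohibited prompt, the low-rank term shifts the layer away from the erased concept.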

What the Experimental Results Show

The paper applied this technology to both Stable Diffusion and the more recent Flux, and conducted three experiments.

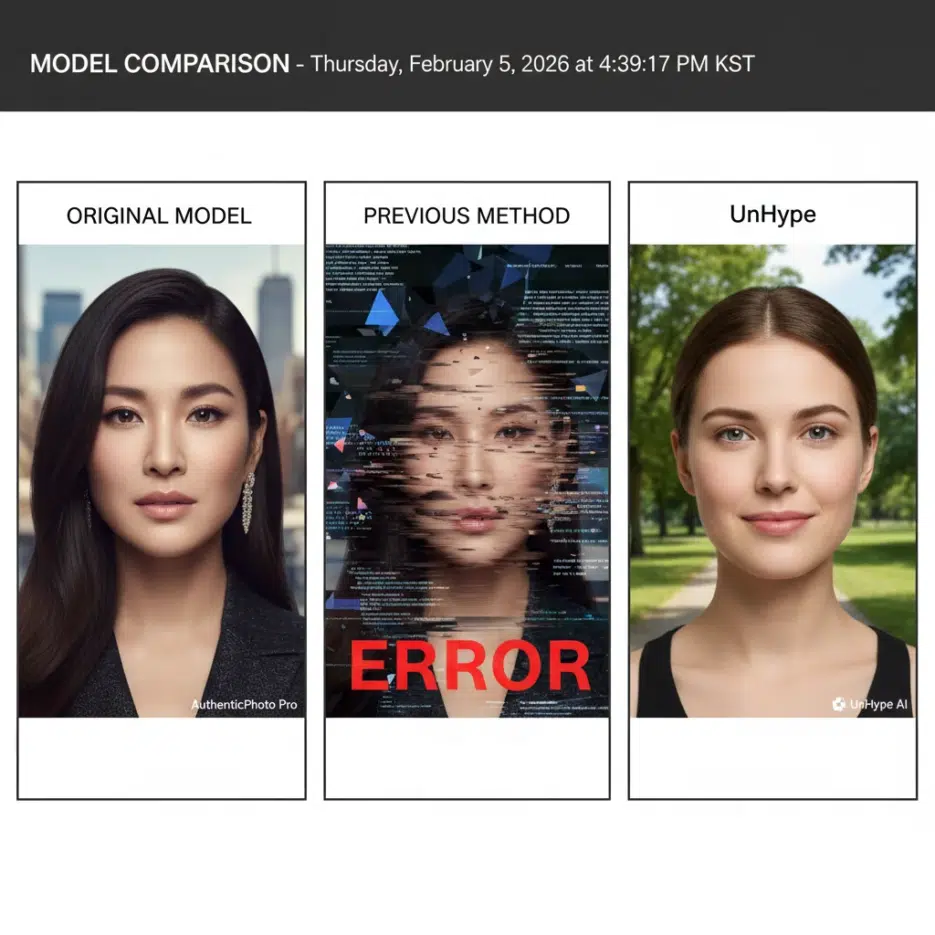

1. Object Erasure

When preventing the model from drawing specific objects like 'airplane' or 'church,' existing methods (ESD, MACE) often blurred the background or created random noise. In contrast, UnHype cleanly removed or replaced only the target object while maintaining the overall image quality.

2. Blocking Explicit Content

This is the most important part. In experiments blocking attempts to generate inappropriate images (I2P benchmark), UnHype showed near-perfect defense rates. More surprisingly, the general ability to draw 'people' was preserved.

3. Large-Scale Person Protection

The researchers conducted an experiment erasing 100 celebrity faces simultaneously. A single UnHype model successfully prevented the generation of all 100 faces. What previously would have required creating and managing 100 separate LoRAs was solved with one model.

Why This Technology Matters

The implications of this paper go beyond simply "doing censorship well."

First, efficiency. As AI models grow larger, retraining costs rise steeply. If you can control the model with a lightweight external module like UnHype, you can substantially reduce service operating costs.

Second, flexibility. The approach is not tied to a single architecture: it applies to older models like Stable Diffusion 1.4 as well as the latest models like Flux. It works like a safety device you can plug in wherever it is needed, much as you would a USB peripheral.

Third, quality preservation. The experiments show that safety does not have to come at the cost of performance.
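To put the "lightweight" part of the efficiency point into rough numbers, here is a small back-of-the-envelope sketch. It compares the size of one dense weight matrix with the size of a LoRA adapter for that same matrix. The layer size and rank are hypothetical values I picked for illustration, and the count covers only the generated adapter, not the hypernetwork itself, whose size I am not assuming here.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Number of entries in a dense d_out x d_in weight matrix."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Entries in the two LoRA factors: A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


# Hypothetical layer size and rank, chosen only to make the arithmetic concrete.
d_in, d_out, rank = 1024, 1024, 8

full = full_params(d_in, d_out)
lora = lora_params(d_in, d_out, rank)
ratio = lora / full

print(f"dense layer: {full} values")
print(f"LoRA adapter: {lora} values ({ratio:.2%} of the dense layer)")
```

With these toy numbers the adapter is a small fraction of the layer it modifies, which is why generating adapters on the fly is plausible at all.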

Core Logic Summary for Developers

If you were to implement this technology in code, it would roughly flow like this. (This is pseudocode for understanding purposes.)

```python
# Pseudocode: the `unhype` package and every call below are illustrative, not a real API.
from unhype import HyperNetwork, DiffusionModel, load_clip_model

# 1. Prepare the manager (hypernetwork) and the artist (diffusion model)
clip_encoder = load_clip_model()
hyper_net = HyperNetwork.load("unhype_weights.pt")
pipe = DiffusionModel.load("stable-diffusion-xl")

# 2. A prompt comes in
prompt = "photo of Angelina Jolie"  # target to erase (celebrity)

# 3. The manager's judgment (the key step): embed the meaning of the prompt
prompt_embedding = clip_encoder(prompt)

# 4. Generate LoRA weights on the spot that neutralize the concept "Angelina Jolie"
dynamic_lora_weights = hyper_net(prompt_embedding)

# 5. Put the glasses on the artist
pipe.apply_lora(dynamic_lora_weights)

# 6. Generate the image
image = pipe.generate(prompt)
# Result: a photo of a generic person who does not resemble Angelina Jolie
```

The existing method required loading files separately like pipe.load_lora("angelina_eraser.safetensors"), but the key with UnHype is that dynamic_lora_weights changes in real-time based on the prompt.
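Since the pseudocode leans on a made-up `unhype` package, here is a toy version that actually runs, using NumPy instead of a real diffusion model. Everything in it is an assumption for illustration: the class `ToyHyperNetwork`, the tiny dimensions, the random untrained weights, and the random vectors standing in for CLIP embeddings. It only demonstrates the mechanism, namely that the low-rank update is computed from the prompt embedding, so different prompts yield different effective weights.

```python
import numpy as np

rng = np.random.default_rng(0)
D_EMB, D_IN, D_OUT, RANK = 8, 6, 4, 2  # toy sizes, chosen only for illustration


class ToyHyperNetwork:
    """Maps a prompt embedding to LoRA factors A (RANK x D_IN) and B (D_OUT x RANK)."""

    def __init__(self):
        # Untrained random projections; a real hypernetwork would be learned.
        self.to_a = rng.normal(scale=0.1, size=(RANK * D_IN, D_EMB))
        self.to_b = rng.normal(scale=0.1, size=(D_OUT * RANK, D_EMB))

    def __call__(self, embedding):
        a = (self.to_a @ embedding).reshape(RANK, D_IN)
        b = (self.to_b @ embedding).reshape(D_OUT, RANK)
        return a, b


def adapted_forward(weight, lora, x):
    """Apply the frozen weight plus the low-rank update: (W + B A) x."""
    a, b = lora
    return weight @ x + b @ (a @ x)


frozen_weight = rng.normal(size=(D_OUT, D_IN))  # stands in for one frozen layer
hyper_net = ToyHyperNetwork()

# Stand-ins for CLIP embeddings of two prompts.
emb_celebrity = rng.normal(size=D_EMB)
# Toy shortcut: a zero embedding emulates a harmless prompt for which a
# trained hypernetwork would have learned to output a (near-)zero update.
emb_cat = np.zeros(D_EMB)

x = rng.normal(size=D_IN)
out_base = frozen_weight @ x
out_cat = adapted_forward(frozen_weight, hyper_net(emb_cat), x)
out_celebrity = adapted_forward(frozen_weight, hyper_net(emb_celebrity), x)

print("harmless prompt keeps base output:", np.allclose(out_cat, out_base))
print("prohibited prompt keeps base output:", np.allclose(out_celebrity, out_base))
```

Note that `frozen_weight` is never modified. The "glasses" live entirely in the extra `b @ (a @ x)` term, which is recomputed for each prompt.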

Limitations and Caveats

Nothing is perfect, and UnHype is no exception.

Bypass via Rephrasing: A cleverly reworded prompt can still slip through. For example, after being told to erase "blue," the model might still draw "sky blue." Even so, it holds up much better against such prompts than existing methods.

Additional Computational Cost: Because the manager has to inspect every prompt and create new glasses each time, generation may be slightly slower. Compared with the cost of retraining the entire model, though, this overhead is minor.

Conclusion

UnHype presented a method to selectively and effectively control what 'should be forgotten' from data that AI has indiscriminately learned.

In the future, whenever AI copyright issues or ethical concerns flare up, instead of discarding the entire model or rebuilding from scratch, technologies like UnHype are expected to play the role of problem-solver. From a developer's perspective, it's an excellent tool that provides safety measures without worrying about model performance degradation.

I believe this is an important step forward, not just toward AI that draws well, but toward AI that can safely coexist within the norms of human society.