I was generating images on Midjourney at 3 AM when I suddenly got curious. "How does this thing read the prompts I write in English and draw pictures?" I'd spent 3 hours just tweaking prompts that day. Adding "cinematic lighting" then removing it, adding "8k ultra detailed" then removing it. But I'd never once looked into what's actually going on behind the scenes.

Then I started feeling uneasy and began digging through papers, and that's where I lost about two days.

The Embedding Trick

What I originally assumed was this: there's a module for processing images, a module for processing text, a module for processing audio, each separate, and they merge somewhere at the end. But that wasn't it.

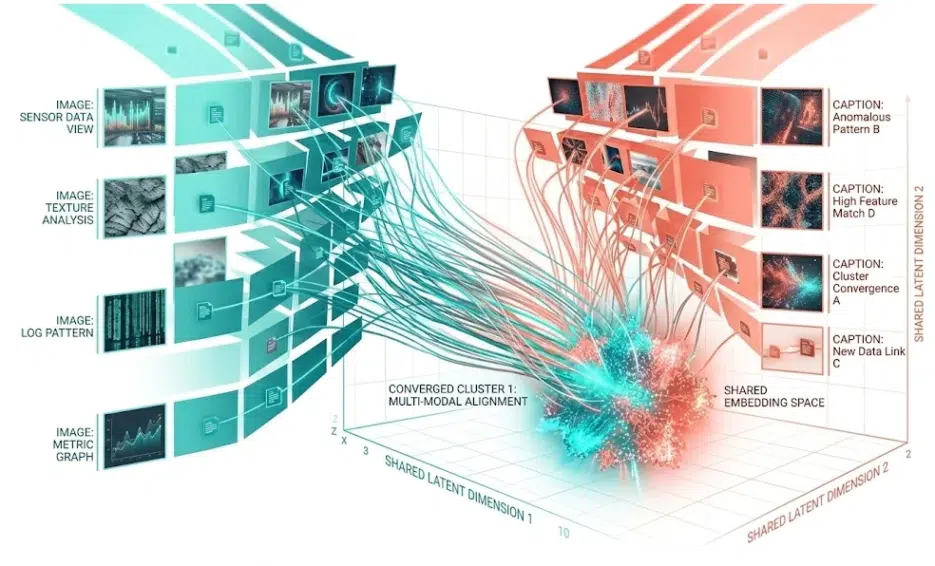

The key insight is that regardless of the input type, everything gets converted into the same kind of numerical cluster called an embedding vector. A photo of a dog, the text "dog," a voice saying "dog" — all three land at nearly the same coordinates in a high-dimensional mathematical space. Our brains do something similar too, converting light and sound into electrical signals for processing. Same principle.

Researchers call this cross-modal reasoning.

A Quick Look at the Architecture

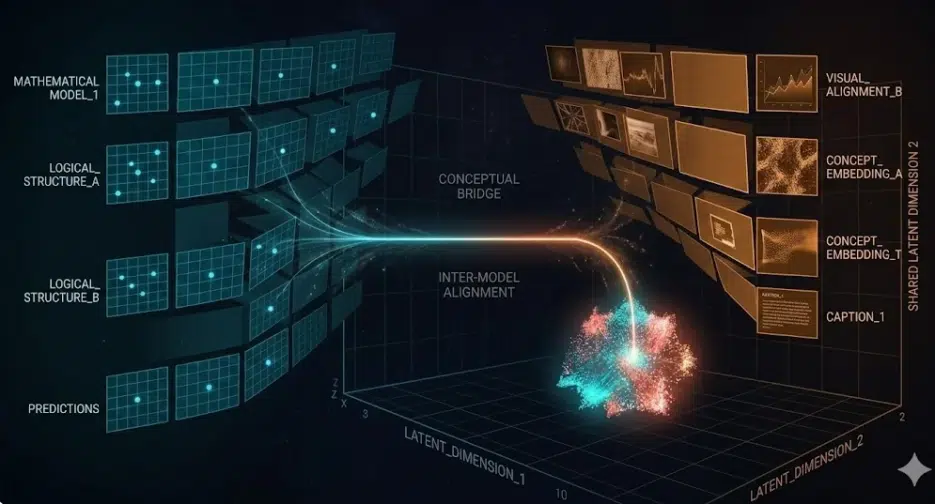

The internal structure of a multimodal LLM has three parts: encoders that convert each input into vectors, a projection layer that aligns different vector spaces into one, and the language model backbone (things like GPT, LLaMA) that handles the final reasoning.

The fact that the projection layer is just a single linear transformation is surprisingly simple — but I'll come back to that later.

ViT — Where I Got Stuck for a While

This is the part I spent the longest on in this post.

In 2020, a Google Research team wrote a paper called "An Image is Worth 16x16 Words." After being presented at ICLR 2021, its citation count exceeded tens of thousands. But what drew me to this paper wasn't the citations — it was the title. There's an English saying "An image is worth a thousand words," and they twisted it to 16x16. The sense of pulling people in with just this one title is truly impressive. It's a case that shows even among researchers, a paper title is marketing. I wish I could write blog titles like that...

Anyway, getting back to the point — here's what this paper did.

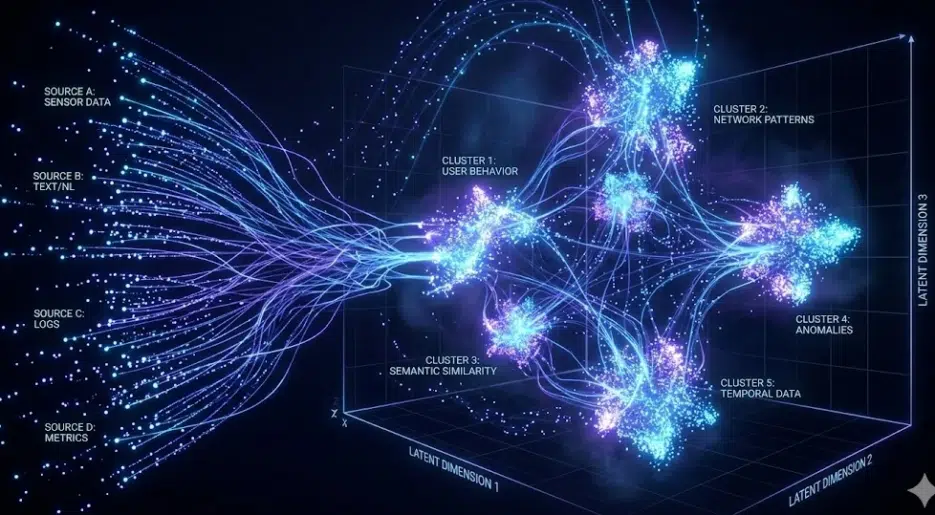

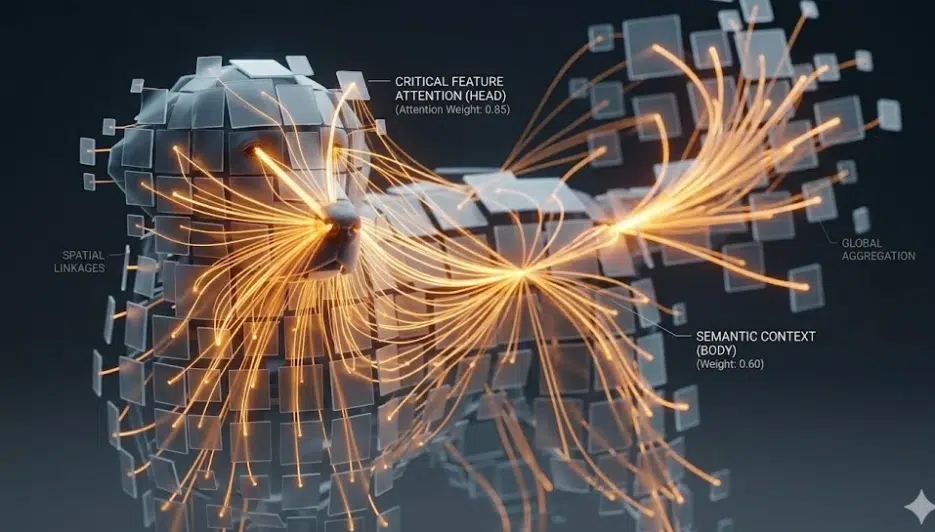

It cuts a 224x224 pixel image into 16x16 patches. That gives you 14x14 = 196 pieces. Each piece gets flattened into a 1D vector, position information (positional embedding) like "I'm the third piece from the top left" gets attached, and it goes into a transformer. It's exactly the same logic as splitting a sentence into word tokens and feeding them to a model in NLP — just applied to images.

What attention does here — and this is my favorite part — is that a dog ear patch by itself is just "a brown pixel cluster." But by referencing nearby face patches and body patches, it learns "this is a Golden Retriever's ear." As it passes through more layers, context accumulates, and by the last layer, it understands "a Golden Retriever sitting on a beach." Each individual patch is a meaningless pixel fragment, but meaning emerges as it passes through attention. Isn't that incredibly cool? Am I the only one getting excited?

A question that immediately came to mind while reading this — "What happens if you change the patch size?" What if it's 32x32 instead of 16x16? Or 8x8? There's a comparison in the paper around Table 5 or 6 (I forgot the exact number) — the smaller the patches, the finer the information, but the computation grows quadratically. With 8x8, you get 784 tokens, and since self-attention is O(n²), it becomes unmanageable. The 16x16 was the sweet spot between performance and cost, but honestly, this was a compromise born from hardware limitations of 2020, not an absolute answer. Recent models sometimes use variable patch sizes too.

Also, ViT loses to CNNs when data is scarce. The paper acknowledges this. On "medium-sized" datasets like ImageNet, accuracy was lower than a similarly-sized ResNet. You need to pre-train on monster datasets like JFT-300M with 300 million images before ViT starts dominating CNNs. Training ViT from scratch for a personal project? Realistically not feasible.

The reason I'm writing so much about ViT is that when I was running Stable Diffusion, I once wondered "How does this model recognize images?" At the time I just assumed it used CNNs and moved on, but it turns out most recent multimodal models are ViT-based. I wish I'd looked into it back then.

CLIP

I'll keep CLIP brief.

Traditional vision models were trained on human-labeled data, but OpenAI's CLIP trained on 400 million image-caption pairs scraped from the internet. It uses a method called contrastive learning — in each batch, matching image-text pairs have their vectors pushed closer together, while mismatched pairs are pushed apart. The key is learning semantic connections without labeling.

Audio is continuous data, unlike text or images. Sampling a 30-second audio clip at 16,000Hz gives you 480,000 data points — feed that directly into a transformer and it'll blow up.

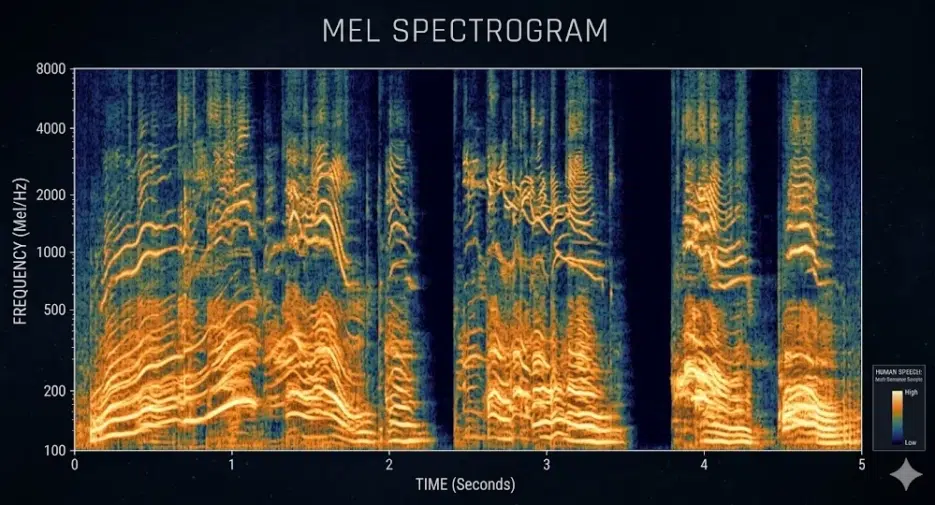

So it gets converted to a mel spectrogram. You chop the audio into 25-millisecond chunks, apply FFT to extract frequency components, and map them to a mel scale tuned to human hearing characteristics, resulting in a 2D heatmap of time × frequency. A 30-second clip becomes roughly an 80×3,000 grid.

From here on, it's the same as image processing. Split into patches and feed into a transformer. OpenAI's Whisper trained this way on 680,000 hours of multilingual audio.

But let me go on a tangent for a moment — the mel scale is quite an interesting thing. Human ears distinguish frequency differences well at low frequencies but become less sensitive at higher frequencies. You can easily tell the difference between 1,000Hz and 1,100Hz, but can barely notice the difference between 8,000Hz and 8,100Hz. The mel scale reflects this nonlinear human hearing by allocating more resolution to the low-frequency range — meaning AI audio models don't "hear like humans" but rather "prioritize the regions humans hear best." This distinction seems surprisingly important but doesn't get mentioned much.

Anyway, back to the main topic.

Training: Freeze and Thaw

Training happens in 2 stages. First, the vision encoder and language model are kept frozen while only the projection layer is trained to establish alignment. Second, the projection layer and language model are both unfrozen and trained together on conversational instruction data. The funny part is that in the second stage, GPT-4 was used to generate synthetic training data — though I'm not sure exactly where I read this. Was it the LLaVA paper or InstructBLIP... Anyway, it's basically AI writing educational materials for AI.

The Deferred Topic — Projection Layer

I mentioned earlier that the projection layer is surprisingly simple — let me explain that here.

The "cat" vector extracted by the vision encoder and the "cat" vector understood by the language model exist in completely different mathematical spaces. The dimensions are different, and the entire system of what the vectors represent is different. The projection layer aligns these into a single space — and it's just one linear transformation. A single matrix multiplication. At most, a small 2-layer MLP.

If you look at Figure 1 of the LLaVA paper, the architecture is shown and that's really all there is. I thought "No way" and checked the code — it was real.